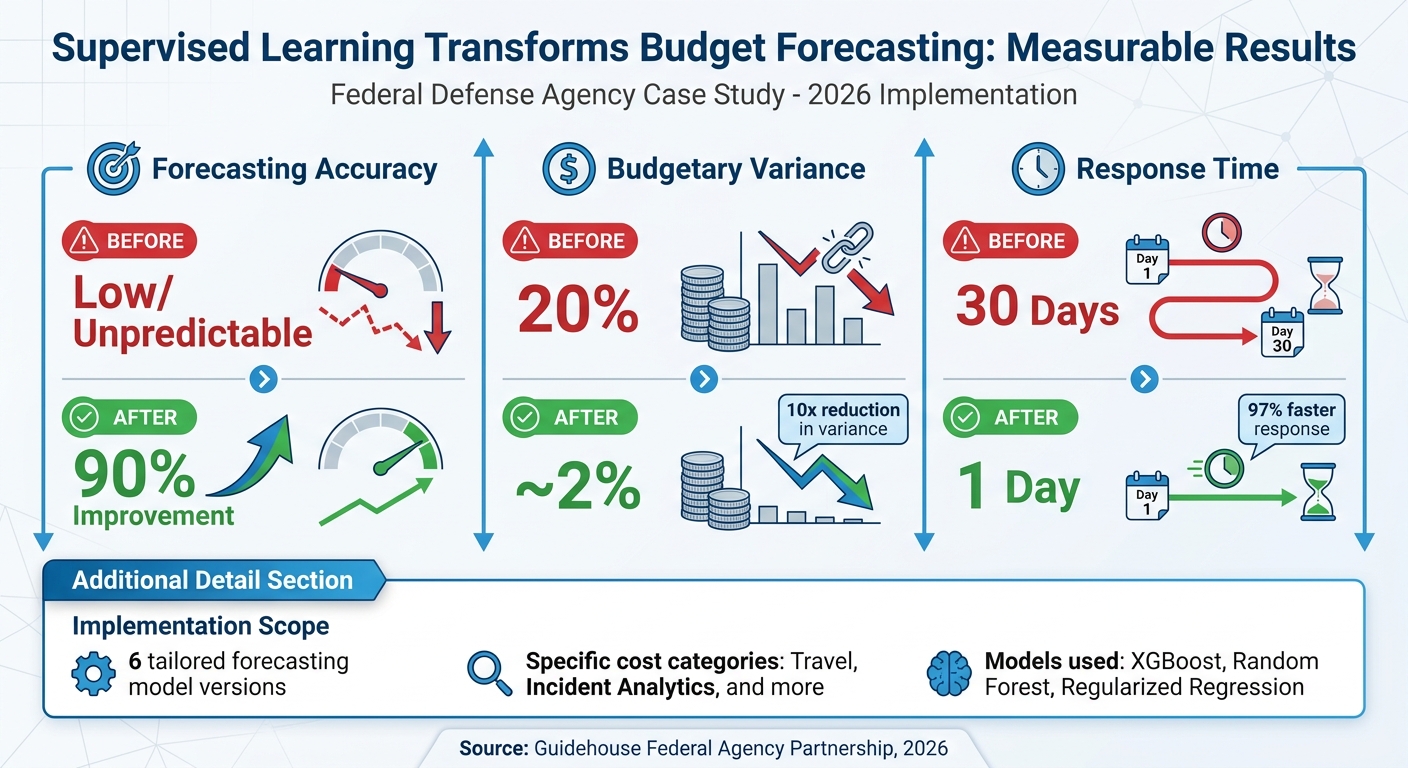

Supervised learning models are transforming budget forecasting by delivering precise, consistent predictions. A federal defense agency partnered with Guidehouse in 2026 to address inefficiencies in its outdated forecasting methods. Results included a 90% improvement in accuracy, budget variances reduced from 20% to 2%, and response times cut from 30 days to just 1 day.

Key takeaways:

- Challenges of old methods: Manual processes, fragmented data, and rigid forecasts caused delays and misallocations.

- Supervised learning solutions: Models like XGBoost and Random Forest improved accuracy and identified key cost factors.

- Benefits achieved: Faster responses, better resource allocation, and more reliable planning.

This case highlights how AI-driven systems can streamline processes and support better financial decisions without replacing human oversight.

Case Study Overview: Business Context and Goals

Business Background and Budgeting Problems

The federal agency featured in this case study operates within the defense and security sector, managing highly intricate budgets. Until 2026, its reliance on manual processes and spreadsheets for handling oversight requests created significant inefficiencies. This fragmented system caused delays and hindered effective planning and accountability.

The budgeting challenges were substantial. Variances of up to 20% and report generation delays stretching 30 days underscored the need for a better solution. Actual expenditures often diverged from forecasts, leading to resource mismanagement and eroding trust among decision-makers. In an environment where immediate transparency is crucial, such delays were simply unacceptable. Recognizing these issues, agency leaders sought to move beyond outdated, static forecasting methods based on historical averages. They needed a system capable of addressing the complex demands of their operations. In the defense and security sector, even minor delays or errors in budgeting can have far-reaching consequences.

These pressing problems established a clear need to define specific performance goals for the AI initiative.

Setting Success Metrics for AI Implementation

To tackle these challenges, the agency needed well-defined, measurable objectives. Partnering with Guidehouse, they developed key performance indicators to evaluate the success of the AI implementation. These metrics centered on improving forecasting accuracy, reducing budgetary variance, and cutting response times.

The goal for forecasting accuracy was to ensure predictions closely matched actual spending across all categories. Budgetary variance, which had been as high as 20%, needed to drop into the single digits - ideally below 5% - to enable more reliable resource allocation. Additionally, the response time for congressional inquiries had to shrink significantly, from 30 days to just 1 day. Achieving this would allow the agency to provide near real-time accountability, a critical requirement for maintaining trust with both external oversight bodies and internal stakeholders.
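As a rough illustration of how the variance target could be checked, the sketch below computes the percentage gap between forecast and actual spending. The formula (absolute difference divided by forecast), the function names, and the dollar figures are assumptions for illustration; the case study does not state how the agency defined variance.

```python
def budget_variance_pct(forecast: float, actual: float) -> float:
    """Absolute gap between actual and forecast spend, as a percentage of forecast."""
    if forecast == 0:
        raise ValueError("forecast must be non-zero")
    return abs(actual - forecast) * 100 / abs(forecast)


def meets_target(forecast: float, actual: float, target_pct: float = 5.0) -> bool:
    """True when variance is under the target (5% was the ideal ceiling named above)."""
    return budget_variance_pct(forecast, actual) < target_pct


# Hypothetical figures in USD, for illustration only.
print(budget_variance_pct(1_000_000, 1_200_000))  # a 20% miss, like the old process
print(meets_target(1_000_000, 1_020_000))         # a 2% miss is under the 5% target
```

Tracking this figure per cost category, rather than only in aggregate, would keep offsetting errors in different categories from hiding each other.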

These metrics were designed to align with the operational demands of a public sector organization, ensuring the AI rollout addressed the agency's most urgent needs.

Implementation: Model Selection and Data Preparation

Selecting Models for Budget Forecasting

Once the agency defined its success metrics, it worked with Guidehouse to evaluate several supervised learning algorithms. The focus was on precision and consistency - models needed to deliver reliable outputs. Just as important was interpretability, as stakeholders required a clear understanding of which variables influenced the forecasts.

The team explored XGBoost, Random Forest, and regularized regression models. Random Forest stood out for its robustness to outliers and its ability to capture intricate interactions, while XGBoost builds trees iteratively, each one correcting the errors of the last, and includes regularization that helps mitigate overfitting. Both tree-based models were particularly effective at uncovering non-linear relationships that traditional linear models might miss. The system's modular design allowed the team to select the best-performing model each month, ensuring adaptability to shifting data patterns.
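The monthly selection step can be sketched as a small backtest: score each candidate on the most recent periods and keep the one with the lowest error. This is a minimal sketch, not the agency's pipeline. Three simple stand-in forecasters replace XGBoost, Random Forest, and regularized regression so that the example runs with the standard library alone, and all names and figures are hypothetical.

```python
from statistics import mean
from typing import Callable, Dict, List

Forecaster = Callable[[List[float]], float]  # history -> next-period prediction


def last_value(history: List[float]) -> float:
    return history[-1]


def moving_average_3(history: List[float]) -> float:
    return mean(history[-3:])


def overall_mean(history: List[float]) -> float:
    return mean(history)


def backtest_mae(model: Forecaster, series: List[float], holdout: int) -> float:
    """Mean absolute error of one-step-ahead forecasts over the last `holdout` periods."""
    errors = []
    for t in range(len(series) - holdout, len(series)):
        prediction = model(series[:t])  # only data available before period t
        errors.append(abs(series[t] - prediction))
    return mean(errors)


def select_best(candidates: Dict[str, Forecaster], series: List[float], holdout: int = 3) -> str:
    """Return the name of the candidate with the lowest backtest error."""
    scores = {name: backtest_mae(model, series, holdout) for name, model in candidates.items()}
    return min(scores, key=scores.get)


# Hypothetical monthly spend (in $ thousands) with a steady upward trend.
monthly_spend = [100, 104, 108, 112, 116, 120, 124, 128]
candidates = {
    "last_value": last_value,
    "moving_average_3": moving_average_3,
    "overall_mean": overall_mean,
}
print(select_best(candidates, monthly_spend))  # last_value
```

Each prediction uses only the history before the period being scored, so the comparison stays honest. Rerunning the same routine every month with fresh actuals gives the kind of rotation described above.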

"Traditional machine learning models... are deterministic: given the same inputs, they return the same outputs." - Discern.io

With the models identified, the next step was to refine the data inputs to ensure maximum accuracy.

Preparing Data and Engineering Features

After selecting the models, the team focused on meticulous data preparation to enhance the accuracy of their forecasts. They pulled data from various sources, including financial systems, CRM platforms, and external market trends. To maintain consistency, they standardized naming conventions, date formats, and measurement units across all datasets.

Feature engineering played a pivotal role in transforming raw data into valuable inputs for the models. For example, the team created lagged features and rolling statistics, such as three-month moving averages, to smooth out fluctuations in volatile categories like travel. Interaction features were also added to capture combined effects, like the performance of specific programs during particular fiscal quarters. External indicators, such as broader economic conditions, were incorporated to provide additional context.
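To make the feature types concrete, the sketch below derives a lagged value, a three-period rolling mean, and a program-by-quarter interaction key. It is an illustrative sketch under assumptions: the function names, the field names, and the travel figures are hypothetical, and the case study does not specify how the agency built its features.

```python
from statistics import mean
from typing import Dict, List, Optional


def build_features(spend: List[float], lag: int = 1, window: int = 3) -> List[Dict[str, Optional[float]]]:
    """For each period, derive a lagged value and a rolling mean from earlier periods only."""
    rows = []
    for t, value in enumerate(spend):
        rows.append({
            "spend": value,
            # Spend `lag` periods ago; None when not enough history exists.
            "lag": spend[t - lag] if t >= lag else None,
            # Mean of the previous `window` periods, excluding the current one to avoid leakage.
            "rolling_mean": mean(spend[t - window:t]) if t >= window else None,
        })
    return rows


def interaction_key(program: str, fiscal_quarter: int) -> str:
    """Combine two categorical fields so a model can learn program-by-quarter effects."""
    return f"{program}_Q{fiscal_quarter}"


travel_spend = [90.0, 120.0, 60.0, 150.0, 105.0]  # hypothetical monthly travel costs
features = build_features(travel_spend)
print(features[3])  # {'spend': 150.0, 'lag': 60.0, 'rolling_mean': 90.0}
print(interaction_key("travel", 4))  # travel_Q4
```

The rolling mean deliberately excludes the current period. Including it would leak the value being predicted into its own input.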

To preserve essential details, the team opted for daily-level granularity instead of monthly aggregations. They also cleaned the data by removing duplicates and filling in missing values. This comprehensive approach ensured a solid and reliable dataset. After preparing at least six months of clean data, they were ready to train and validate their forecasting system effectively.
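The cleaning steps might look like the following sketch, which drops duplicate rows and fills gaps from the most recent known value in the same category. Forward filling is an assumption chosen for illustration. The source does not say which fill method the team used, and the record layout and values are hypothetical.

```python
from typing import Dict, List, Optional, Tuple

Record = Tuple[str, str, Optional[float]]  # (date, category, amount)


def drop_duplicates(records: List[Record]) -> List[Record]:
    """Keep the first occurrence of each (date, category) pair, preserving order."""
    seen = set()
    unique = []
    for record in records:
        key = (record[0], record[1])
        if key not in seen:
            seen.add(key)
            unique.append(record)
    return unique


def forward_fill(records: List[Record]) -> List[Record]:
    """Replace a missing amount with the most recent known amount in the same category.

    Assumes records are already sorted by date.
    """
    last_known: Dict[str, float] = {}
    filled = []
    for date, category, amount in records:
        if amount is None:
            amount = last_known.get(category)  # stays None if nothing is known yet
        else:
            last_known[category] = amount
        filled.append((date, category, amount))
    return filled


raw = [
    ("2025-01-01", "travel", 410.0),
    ("2025-01-01", "travel", 410.0),  # duplicate row
    ("2025-01-02", "travel", None),   # missing value
    ("2025-01-03", "travel", 380.0),
]
clean = forward_fill(drop_duplicates(raw))
print(clean)
```

For volatile categories, a category average or an explicit "missing" indicator may be a better choice than carrying the last value forward.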

Results: How Supervised Learning Improved Budget Forecasting

[Figure: Supervised learning budget forecasting results - 90% accuracy improvement case study]

Measured Benefits and Cost Savings

When the federal agency teamed up with Guidehouse to revamp its budget forecasting process, the results were striking. Forecasting accuracy jumped by an impressive 90%, giving the agency a much more dependable foundation for planning. Budgetary variance, which previously hovered around 20%, dropped to an astonishingly low 2%, minimizing the risk of financial missteps due to over- or under-spending. Efficiency gains were equally notable - response times for congressional inquiries and budget-related tasks were slashed from 30 days to just 1 day.

To address specific needs, the agency implemented six tailored forecasting model versions, each designed for distinct cost categories like travel and incident analytics. This approach helped unify previously fragmented processes, making the overall system more streamlined and effective. For smaller organizations looking to achieve similar results, following an AI integration checklist can help ensure a smooth rollout.

| Metric | Before Implementation | After Supervised Learning |
|---|---|---|
| Forecasting Accuracy | Low/Unpredictable | 90% Improvement |
| Budgetary Variance | 20% | ~2% |
| Response Time | 30 Days | 1 Day |

These measurable improvements not only saved time and money but also provided a stronger basis for informed decision-making, as highlighted in the next section on scenario modeling and human review.

Scenario Modeling and Human Review

The supervised learning system brought new capabilities to the agency, particularly through advanced "what-if" scenario modeling. Leadership could now simulate the financial impact of strategic decisions - like reallocating contractor resources - and receive detailed results within minutes. This rapid feedback loop significantly accelerated oversight and internal planning processes.

Importantly, the system didn’t replace human expertise but worked alongside it. Each forecast included upper and lower confidence bounds, giving stakeholders the tools to challenge assumptions and pinpoint areas that required deeper analysis. Division leaders collaborated with the AI system, which flagged anomalies for high-risk categories. Routine, seasonal patterns were handled automatically, freeing up human resources to focus on outliers and unexpected events. This balanced approach ensured that while the system handled repetitive tasks efficiently, human oversight remained integral for critical decision-making.
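One simple way to produce upper and lower bounds, and to route out-of-band results to an analyst, is to size the band from the spread of past forecast errors. The sketch below assumes errors are roughly normal and centered on zero, which is why it uses a 1.96 multiplier for an approximate 95% band. The case study does not describe the agency's method, and every name and figure here is hypothetical.

```python
from statistics import stdev
from typing import List, Tuple


def forecast_bounds(point_forecast: float, past_errors: List[float], z: float = 1.96) -> Tuple[float, float]:
    """Lower and upper bounds from the spread of past forecast errors (actual minus forecast)."""
    spread = z * stdev(past_errors)
    return point_forecast - spread, point_forecast + spread


def needs_review(actual: float, bounds: Tuple[float, float]) -> bool:
    """Flag an actual value for analyst review when it falls outside the bounds."""
    lower, upper = bounds
    return not (lower <= actual <= upper)


past_errors = [-4.0, 3.0, -2.0, 5.0, -2.0]  # hypothetical residuals, $ thousands
bounds = forecast_bounds(200.0, past_errors)
print(bounds)
print(needs_review(204.0, bounds))  # inside the band: handled automatically
print(needs_review(230.0, bounds))  # outside the band: routed to an analyst
```

Widening or narrowing `z` per cost category is one way to tune how much work reaches human reviewers.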

Lessons Learned and Recommendations for SMEs

Addressing Common AI Implementation Challenges

The federal agency's experience highlights the importance of tackling key challenges to ensure AI success. Data quality is the foundation for effective AI outcomes. Before diving into AI-driven forecasting, SMEs need to consolidate CRM, ERP, and billing data into a unified system with standardized metric definitions. Without this, AI models are set up to fail from the start.

As spending patterns and data sources change, models trained on older data can drift, compromising prediction accuracy. To counter this, the agency took an ensemble-style approach: each month it compared techniques like XGBoost, Random Forest, and neural networks and selected the best performer for current conditions. This strategy avoided the common pitfall of relying on a single model, which often loses accuracy over time.
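A drift check can be as simple as comparing recent forecast error against the error measured when the model was last validated. The sketch below uses mean absolute percentage error and a tolerance factor of 1.5. Both the metric and the threshold are assumptions for illustration rather than details from the case study, and the figures are hypothetical.

```python
from statistics import mean
from typing import List


def mean_abs_pct_error(actuals: List[float], forecasts: List[float]) -> float:
    """Mean absolute percentage error across paired actual/forecast values."""
    return mean(abs(a - f) * 100 / abs(a) for a, f in zip(actuals, forecasts))


def drift_detected(baseline_mape: float, recent_mape: float, tolerance: float = 1.5) -> bool:
    """True when recent error exceeds the baseline error by more than the tolerance factor."""
    return recent_mape > baseline_mape * tolerance


# Hypothetical figures: error at validation time vs. error in the latest periods.
baseline = mean_abs_pct_error([100.0, 200.0, 400.0], [98.0, 204.0, 392.0])
recent = mean_abs_pct_error([100.0, 200.0], [90.0, 220.0])
print(baseline, recent)
print(drift_detected(baseline, recent))  # recent error is well above baseline
```

A positive result would trigger retraining or a fresh comparison of candidate models.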

SMEs can also improve forecast precision by breaking down processes into cost-specific models. This modular approach keeps systems manageable while enhancing accuracy. Additionally, human oversight remains crucial. AI outputs should serve as starting points, with analysts reviewing flagged anomalies and variance thresholds to apply their judgment.

These lessons provide a roadmap for SMEs to choose and implement AI tools that align with their specific needs.

Finding AI Tools for SMEs

With these challenges in mind, SMEs can improve their outcomes by leveraging directories that focus on AI solutions tailored for small and medium-sized businesses. Platforms like AI for Businesses (https://aiforbusinesses.com) offer curated collections of tools designed to be both powerful and user-friendly. These platforms help SMEs find solutions that address operational challenges, such as data fragmentation and model drift, without requiring deep technical expertise.

When selecting tools, focus on those that centralize financial data and automate version control. A great example comes from Aiven, a Finnish technology company with 450 employees. In March 2025, under the guidance of COO & CFO Kenneth Chen, Aiven transitioned from spreadsheet-based budgeting to the Abacum FP&A platform. This move eliminated version control headaches and enabled quarterly rolling forecasts, saving the finance team significant time each month.

"The main benefit... is having confidence that the budget that we create every year and the forecast that we do on a rolling quarterly basis, represent the best information we have to drive the business." - Kenneth Chen, COO & CFO, Aiven

SMEs should begin with high-impact use cases where accuracy is critical. Examples include bookings forecasting, churn prediction, and demand planning. Opt for platforms that support rolling forecasts instead of static annual budgets, as they allow for more flexible and responsive planning. The ideal tool will integrate smoothly with existing systems and offer clear documentation and support to ease the implementation process.

Conclusion: The Future of Budget Forecasting with Supervised Learning

Supervised learning is changing how small and medium-sized enterprises (SMEs) handle budget forecasting. Instead of sticking to rigid annual plans, businesses can now adopt flexible, data-driven systems. The federal agency case study highlights the potential: AI-driven forecasting reduced budget variances from 20% to about 2% and cut response times from 30 days to just 1 day. Results like these mark a major change in financial operations and underline the importance of adaptability for long-term success.

To maintain these gains, forecasting models need constant refinement. One effective approach is ensemble forecasting, where multiple techniques are compared regularly to identify the most accurate performer. This method creates a feedback loop that continuously improves predictions. Traditional machine learning models, when tuned against historical data, can achieve accuracy rates between 95% and 98%, but only if they are updated frequently to address issues like data drift.

SMEs should move toward rolling forecasts instead of static budgets by breaking down analyses into smaller, cost-specific models that are easier to manage. Achieving this requires collaboration between IT teams, data scientists, and business experts to ensure the models align with real-world operations.

The future of budget forecasting doesn't replace human judgment - it enhances it. AI takes care of tasks like data consolidation and initial predictions, allowing finance teams to focus on strategic planning and scenario analysis. As Stefan Spiegel, CFO of SBB Cargo AG, puts it:

"With today's computational and mathematical possibilities... you no longer have to shy away from complexity. Instead, you can digitally reproduce the actual business processes."

For SMEs ready to embrace AI-powered forecasting, platforms like AI for Businesses (https://aiforbusinesses.com) offer curated tools tailored to small and medium-sized operations. The key to success lies in starting with clean data, setting clear goals, and committing to continuous learning - not just relying on advanced algorithms.

FAQs

What data do I need to start AI budget forecasting?

To kick off AI-powered budget forecasting, you'll need three critical components: historical financial data, current actuals, and key drivers or assumptions. These elements allow machine learning models to spot trends, adjust forecasts in real time, and improve accuracy as fresh data is introduced.

How do I prevent forecast accuracy from degrading over time?

To keep forecasts reliable, monitor model performance regularly and retrain with updated datasets so the models adapt to new data and evolving conditions. Using probabilistic forecasts and establishing continuous learning loops can also help your models respond to shifting patterns, reducing the chance that accuracy slips over time.

How can I keep forecasts explainable for leadership and auditors?

To make forecasts easier to understand, it's important to use clear and transparent methods. Clearly outline assumptions, key drivers, and reasoning behind the numbers. Leverage AI tools that can break down variances in plain English and provide evidence-supported insights. Centralizing your data can also cut down on confusion, ensuring everyone is working with the same information.

Additionally, incorporating scenario analysis and keeping forecasts updated regularly adds more clarity and flexibility. When these practices are combined, forecasts become much easier for leadership and auditors to follow and trust.