AI systems can transform how businesses operate, but they also introduce risks like bias, unpredictable behavior, and compliance challenges. For small and medium-sized enterprises (SMEs), managing these risks is critical to avoid data breaches, fines, and reputational damage. Pre-designed templates simplify this process, offering structured frameworks to assess and mitigate risks efficiently.

Key Takeaways:

- AI risk assessment identifies vulnerabilities in AI systems, focusing on technical, ethical, and legal risks.

- Regulations like the EU AI Act and voluntary frameworks like the NIST AI RMF are pushing businesses toward stricter compliance.

- Templates streamline risk management, covering areas such as bias testing, data governance, and third-party vendor risks.

- Tools like the Australian AI Impact Assessment Tool, Canada’s Algorithmic Impact Assessment Tool, and the NIST AI Risk Management Framework provide clear steps for SMEs.


What is AI Risk Assessment?

AI risk assessment is the process of systematically identifying potential harms, vulnerabilities, and failure points in AI systems. It takes into account the unpredictable and evolving nature of machine learning models to determine the right mitigation strategies. This focused approach matters because AI introduces risks that traditional IT frameworks were not designed to cover.

Unlike standard cybersecurity measures, AI risk assessments go deeper. They tackle issues like algorithmic bias, safety failures, adversarial attacks, data quality problems, and broader societal impacts. The process involves several key steps: documenting the AI system, identifying risks across technical, operational, ethical, and legal domains, assessing the likelihood and impact of these risks, reviewing existing controls, and laying out new actions to address any gaps.

Regulatory pressure is also increasing. The EU AI Act requires certain deployers of high-risk AI systems to complete a Fundamental Rights Impact Assessment before putting those systems into use. In the U.S., regulators are following suit. In September 2023, the CFPB issued guidance stating that lenders using AI must give clear, specific reasons for adverse credit decisions - vague responses like "the model said no" are not acceptable. Rebecca Leung, Founder of RiskTemplates, sums up the urgency:

"AI risk is moving faster than most organizations' governance programs. Regulators aren't waiting."

Conducting a thorough AI risk assessment doesn’t just help meet regulatory requirements. It also supports operational reliability and builds stakeholder trust by proactively addressing risks and incorporating human oversight where needed. The message is clear: "we didn’t assess it" is no longer an acceptable excuse for harmful or discriminatory AI outputs. These foundational steps set the stage for using the templates discussed in the next sections.

7 AI Risk Assessment Templates for Businesses

For small and medium-sized enterprises (SMEs) aiming to make AI risk management easier, these templates offer practical, ready-to-use solutions. Covering technical, ethical, and compliance areas, they help businesses identify risks early. Some tools, like those from the Canadian and Australian governments, are even available under open licenses for public use and sharing.

- AI Impact Assessment Tool (Australia): Created by the Australian Government, this tool is based on AI Ethics Principles and uses a risk-scoring system across 12 assessment sections. It starts with a threshold evaluation to decide if a full review is necessary, followed by a detailed analysis of principles like fairness, safety, privacy, and accountability.

- SafeAI-Aus Checklist: This checklist includes eight sections that address data governance, security, human oversight, and ongoing monitoring. It uses a quantitative risk scoring system (Probability × Impact on a 1–5 scale), making it particularly useful for high-stakes applications like loan approvals, hiring decisions, or medical diagnoses.

- OWASP Top 10: From the Open Worldwide Application Security Project, this checklist focuses on identifying vulnerabilities specific to AI systems. It’s especially useful for addressing technical security concerns and protecting systems from potential attacks.

- Algorithmic Impact Assessment Tool (Canada): This Canadian tool is a mandatory questionnaire for automated decision-making systems. It includes 65 risk questions and 41 mitigation questions, categorizing systems into four impact levels based on scores: Level I (0–25%), Level II (26–50%), Level III (51–75%), and Level IV (76–100%). Systems with mitigation measures exceeding 80% receive a 15% score reduction.

- NIST AI Risk Management Framework: Developed by the National Institute of Standards and Technology, this voluntary framework is a cornerstone for modern AI risk assessments. It supports systematic testing, evaluation, and bias checks. A companion "Generative AI Profile" addresses risks tied to large language models and generative systems.

- Risk Management Guide and Compliance Frameworks: This framework uses a scoring method where scores of 16–25 are flagged as critical and require executive-level approval before moving forward.

- AI Vendor Risk Assessment Questionnaire: Designed to evaluate third-party AI vendors, this template highlights compliance gaps and risk areas. It covers vendor dependencies, fallback strategies, update schedules, and supply chain risks. It’s especially helpful for monitoring third-party models and ensuring they don’t degrade over time.
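To make the scoring idea concrete, here is a minimal Python sketch of a Probability × Impact score on a 1–5 scale. Pairing that score with the 16–25 "critical" band is an assumption: the two come from different templates in the list, although the band matches the range of a 5 × 5 matrix. The `Risk` class, its field names, and the sample risks are hypothetical and not taken from any of the templates.

```python
from dataclasses import dataclass

# Assumption: the 16-25 critical band is applied to a Probability x Impact score.
CRITICAL_MIN = 16


@dataclass(frozen=True)
class Risk:
    name: str
    probability: int  # 1 (rare) to 5 (almost certain)
    impact: int       # 1 (negligible) to 5 (severe)

    def __post_init__(self) -> None:
        # Reject ratings outside the 1-5 scale so scores stay within 1-25.
        for field_name in ("probability", "impact"):
            value = getattr(self, field_name)
            if not 1 <= value <= 5:
                raise ValueError(f"{field_name} must be between 1 and 5, got {value}")

    @property
    def score(self) -> int:
        return self.probability * self.impact

    @property
    def needs_executive_approval(self) -> bool:
        return self.score >= CRITICAL_MIN


# Hypothetical entries for illustration only.
risks = [
    Risk("Chatbot gives outdated opening hours", probability=3, impact=2),
    Risk("Biased loan approvals", probability=4, impact=5),
]

# List the highest-scoring risks first.
for risk in sorted(risks, key=lambda r: r.score, reverse=True):
    status = "critical" if risk.needs_executive_approval else "below critical band"
    print(f"{risk.name}: score {risk.score} ({status})")
```

Sorting by score puts the items that need executive sign-off at the top of the review list. How the scores below 16 are banded is left open here because the text does not specify it.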

These tools provide SMEs with accessible ways to tackle AI risks. The next section explains how to put these frameworks into action.
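As a worked example, the level bands and mitigation adjustment described for the Canadian tool can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the official calculation: the function name `aia_impact_level` and its parameters are hypothetical, "exceeding 80%" is read as a strict comparison, and the 15% reduction is read as scaling the raw impact score by 0.85.

```python
def aia_impact_level(raw_impact: float, max_impact: float,
                     mitigation: float, max_mitigation: float) -> tuple[float, str]:
    """Return (adjusted score as a percentage, impact level) for one system."""
    if max_impact <= 0 or max_mitigation <= 0:
        raise ValueError("maximum scores must be positive")
    if not (0 <= raw_impact <= max_impact and 0 <= mitigation <= max_mitigation):
        raise ValueError("scores must lie between 0 and their maximum")

    adjusted = raw_impact
    # Assumption: strong mitigation (more than 80% of the maximum) reduces
    # the raw impact score by 15%.
    if mitigation / max_mitigation > 0.80:
        adjusted = raw_impact * 0.85

    percent = round(100 * adjusted / max_impact, 1)

    # Assumption: a value above a band's upper bound (for example 25.5%)
    # falls into the next band, since the text lists whole-number ranges only.
    if percent <= 25:
        level = "I"
    elif percent <= 50:
        level = "II"
    elif percent <= 75:
        level = "III"
    else:
        level = "IV"
    return percent, level


# Same raw impact, weak versus strong mitigation.
print(aia_impact_level(28, 100, mitigation=30, max_mitigation=100))
print(aia_impact_level(28, 100, mitigation=90, max_mitigation=100))
```

In this example the same system moves from Level II to Level I once its mitigation score passes the 80% mark, which shows why documenting mitigation measures is worth the effort.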

How SMEs Can Implement These Templates

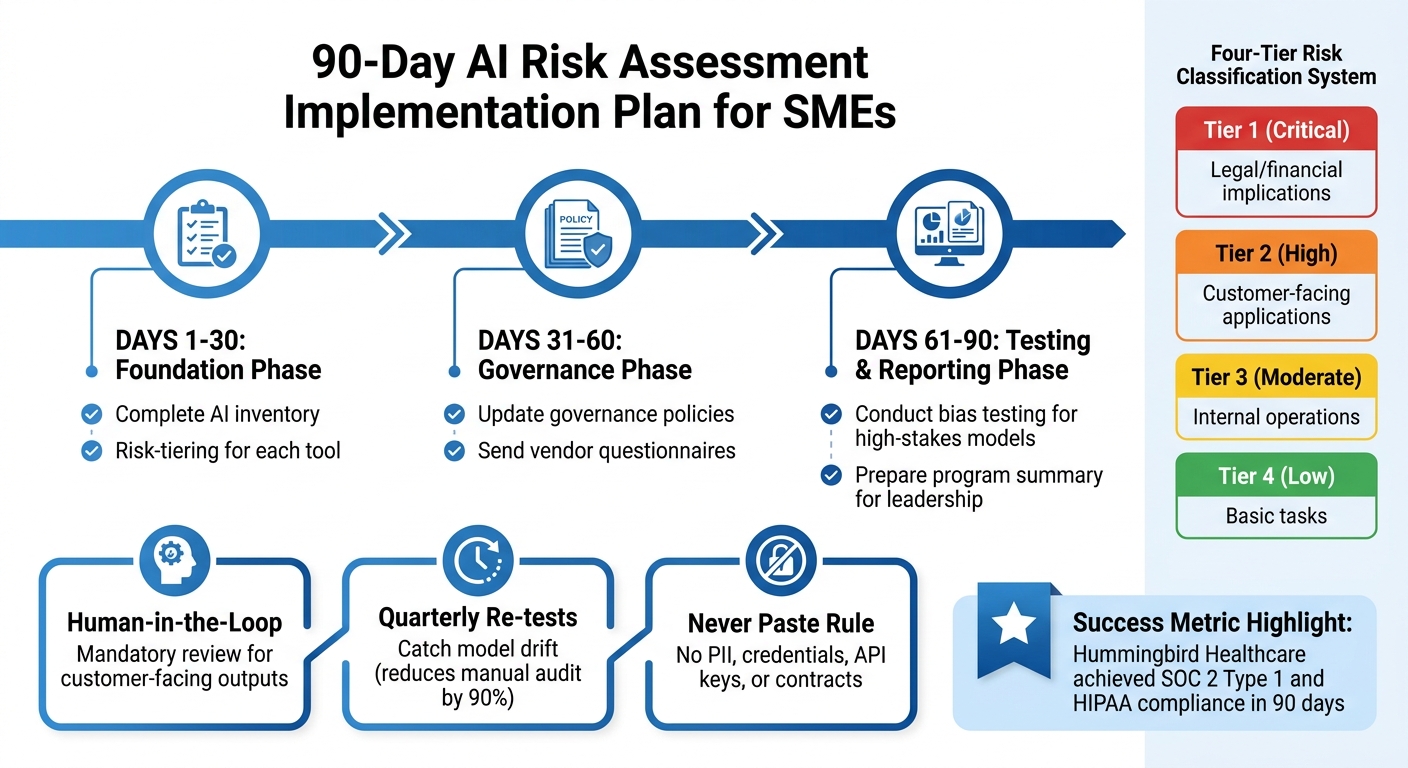

AI Risk Assessment Implementation Timeline for SMEs: 90-Day Action Plan

The first step is to align the template with your risk level. For businesses handling sensitive data, such as those in healthcare or finance, a Privacy-First approach is essential. This means enforcing strict input rules and setting mandatory retention limits. On the other hand, for less critical operations, a simpler "Starter Pack" can suffice. This might include basic safeguards, like a one-page use policy and a strict "never paste" rule for passwords or API keys. The beauty of this approach? It can be implemented in just a few hours. By classifying your systems upfront, you ensure your efforts are concentrated where they matter most.

To streamline assessments, categorize your AI tools using a four-tier risk system. Not all tools demand the same level of scrutiny. For example, a grammar checker poses much less risk than a loan approval algorithm. Here's a suggested breakdown:

- Tier 1 (Critical): Tools with legal or financial implications.

- Tier 2 (High): Customer-facing applications.

- Tier 3 (Moderate): Tools for internal operations.

- Tier 4 (Low): Tools handling basic tasks.
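The tiering above can be captured as a small decision rule. The following Python sketch assumes that the most severe applicable tier wins when a tool fits several descriptions; the `Tier` enum, the `classify` function, and its three yes/no questions are hypothetical names chosen for illustration.

```python
from enum import IntEnum


class Tier(IntEnum):
    CRITICAL = 1  # legal or financial implications
    HIGH = 2      # customer-facing applications
    MODERATE = 3  # internal operations
    LOW = 4       # basic tasks


def classify(legal_or_financial: bool, customer_facing: bool,
             internal_operations: bool) -> Tier:
    """Return the most severe tier that applies (assumed precedence rule)."""
    if legal_or_financial:
        return Tier.CRITICAL
    if customer_facing:
        return Tier.HIGH
    if internal_operations:
        return Tier.MODERATE
    return Tier.LOW


# The two examples from the text: a loan approval algorithm and a grammar checker.
loan_model = classify(legal_or_financial=True, customer_facing=True,
                      internal_operations=False)
grammar_checker = classify(legal_or_financial=False, customer_facing=False,
                           internal_operations=False)
print(loan_model.name, grammar_checker.name)
```

Checking the conditions from most to least severe keeps a customer-facing tool with financial consequences in Tier 1 rather than Tier 2.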

Once classification is complete, assign ownership and map out a custom AI implementation plan. Take inspiration from Hummingbird Healthcare, which achieved SOC 2 Type 1 and HIPAA compliance in just three months by using automated frameworks and clear accountability measures. For SMEs, a practical timeline could look like this:

- Days 1–30: Complete an AI inventory and risk-tiering for each tool.

- Days 31–60: Update governance policies and send vendor questionnaires.

- Days 61–90: Conduct bias testing for high-stakes models and prepare a program summary for leadership.

For customer-facing or public outputs, introduce a "Human-in-the-Loop" rule. This ensures compliance claims, customer communications, and public statements undergo human review before release. Governance only works if people follow it, so make this a non-negotiable step. To maintain standards, re-test models quarterly to catch model drift, a practice reported to reduce manual audit workloads by as much as 90%.

Finally, leverage your risk classification to maintain a centralized registry of approved tools. This registry should include details like each tool's purpose and risk profile. Enforce critical rules, such as prohibiting the pasting of customer PII, credentials, API keys, or sensitive contracts into tools. In the event of an incident, halt operations immediately, document the issue, contact the vendor to delete affected data, assess the customer impact, and revise your policies accordingly.
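A registry like this does not need special software to get started. The Python sketch below shows one possible shape for it, together with a rough pre-send check for the "never paste" rules. Everything here is an assumption for illustration: the tool names, the `ApprovedTool` fields, and the `check_prompt` function are hypothetical, and the regular expressions are simple heuristics that will miss many kinds of PII and credentials.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class ApprovedTool:
    name: str
    purpose: str
    risk_tier: int  # 1 (critical) to 4 (low)
    owner: str


# Hypothetical entries; replace with your own inventory.
REGISTRY = {
    tool.name.lower(): tool
    for tool in [
        ApprovedTool("GrammarHelper", "Proofreading internal drafts", 4, "Operations lead"),
        ApprovedTool("LoanScorer", "Credit decision support", 1, "Head of risk"),
    ]
}

# Illustrative heuristics only, not a complete data-loss-prevention rule set.
BLOCKED_PATTERNS = {
    "email address": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "AWS-style access key ID": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "inline credential": re.compile(r"(?i)\b(password|api[_-]?key|secret)\s*[:=]\s*\S+"),
}


def check_prompt(tool_name: str, text: str) -> list[str]:
    """Return policy problems found; an empty list means nothing was flagged."""
    problems = []
    if tool_name.lower() not in REGISTRY:
        problems.append(f"{tool_name} is not in the approved-tool registry")
    for label, pattern in BLOCKED_PATTERNS.items():
        if pattern.search(text):
            problems.append(f"possible {label} detected")
    return problems


print(check_prompt("GrammarHelper", "Please tidy this paragraph."))
print(check_prompt("UnknownBot", "password = hunter2"))
```

A check like this supports the policy but does not replace it. Staff training and the incident steps described above still matter, because pattern matching cannot recognize a sensitive contract or an unusual key format.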

Conclusion

AI risk assessment isn't about holding back progress - it’s about moving forward responsibly. The templates highlighted here provide SMEs with a structured way to uncover risks that traditional IT assessments might overlook, such as algorithmic bias or adversarial attacks [1,4]. Rather than leaving you to navigate the complex regulatory environment from scratch, these frameworks simplify compliance with standards like the EU AI Act and ISO/IEC 42005, breaking them down into actionable, easy-to-use checklists [3,6].

These tools are designed for speed and practicality. Automated governance platforms, for instance, can handle over 65% of repetitive workflows. Meanwhile, pre-built templates allow businesses to establish baseline governance measures in just a few hours. Whether you’re managing critical financial systems or using AI for internal operations, these resources ensure you maintain the necessary oversight without slowing things down. They align perfectly with the earlier action steps for quick and effective implementation.

As regulations tighten, "we didn’t assess it" won’t hold up as an excuse. Using these templates, SMEs can minimize audit risks, avoid costly penalties, and build trust with customers and partners alike.

Don’t wait. Choose a template that fits your risk profile, assign clear responsibilities, and commit to regular reviews. With the right framework, you won’t just meet regulatory demands - you’ll turn responsible AI practices into a strength, ensuring innovation continues while fostering trust through thoughtful risk management.

FAQs

Which AI risk template should I start with?

Start with the AI Impact Assessment Guide & Template from RiskTemplates. This comprehensive framework dives into key areas like risk classification, data and model risks, fairness, explainability, and monitoring. It's especially useful if you're focused on meeting regulatory requirements.

If you're short on time, the AI Risk Assessment Checklist from SafeAI-Aus might be a better fit. This tool offers a structured process that takes just 1–2 hours to help identify and address potential risks.

For ongoing risk management, consider using the AI Risk Register Template, which is designed for continuous tracking and updates.

How do I decide if an AI tool is high-risk?

To figure out whether an AI tool falls into the high-risk category, you need to look at the potential harms, weaknesses, and broader societal effects it could have over its entire lifecycle. Pay special attention to risks such as algorithmic bias, safety failures, security flaws, and privacy breaches.

AI systems used in critical sectors like healthcare or finance - or those subject to strict regulations, such as the EU AI Act - are often considered high-risk. These tools demand careful evaluation and steps to reduce their risks.

How often should we redo an AI risk assessment?

AI risk assessments should be done on a regular basis, with an annual review being a good starting point. This helps account for any changes in the workplace or systems. However, if there are major updates or unexpected issues, conducting assessments more often can help ensure potential risks are identified and properly addressed.