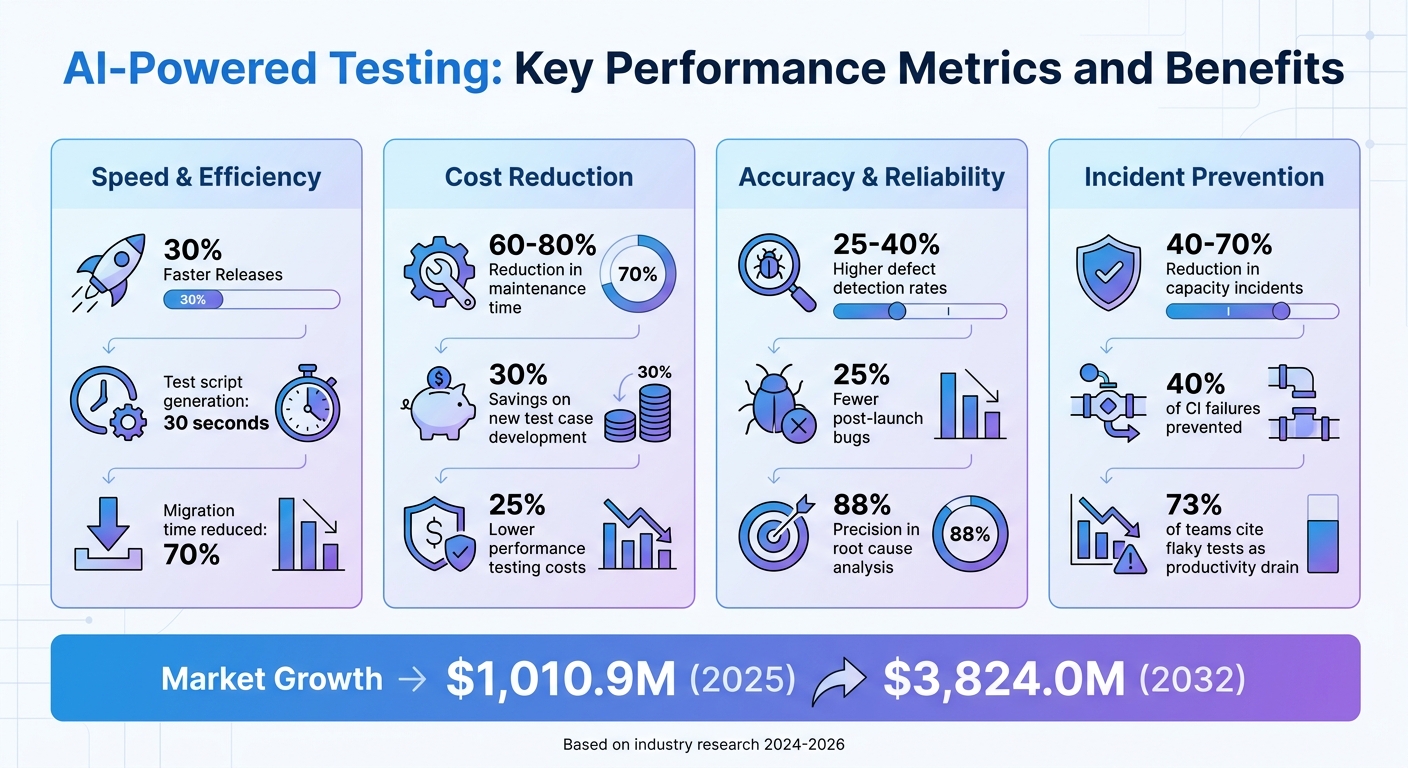

AI is transforming scalability testing by automating repetitive tasks, predicting system failures, and improving test efficiency. It creates test scripts in seconds, updates them automatically when applications change, and identifies performance bottlenecks before they disrupt systems. For businesses, this means faster releases, fewer bugs, and reduced costs. Key takeaways include:

- Automated Test Scripts: AI generates scripts from plain-language descriptions and maintains them as systems evolve.

- Predictive Analysis: AI uses historical data to forecast capacity issues, reducing capacity-related incidents by up to 70%.

- Self-Healing Tests: When a script breaks because the application changed, AI repairs the broken locator in real time, cutting maintenance time by 60-80%.

- Improved Accuracy: Defect detection rates increase by 25-40%, ensuring more reliable software.

AI-powered tools save time, reduce manual effort, and deliver actionable insights, making them essential for modern software testing.



AI-Powered Test Script Creation and Updates

AI has changed how test scripts are created and maintained. By automating script generation and updates, it addresses the main weakness of manual scripting, which is slow to begin with and becomes slower as applications grow and change. AI can now generate automation scaffolding in about 30 seconds, saving valuable time and effort.

Creating Test Scripts Automatically

AI tools powered by large language models (LLMs) can turn plain-English descriptions into ready-to-use test scripts compatible with frameworks like Playwright, Selenium, and JMeter. Instead of writing complex code, teams describe their testing needs in natural language, and the AI handles the technical details and repetitive coding tasks.

For scalability testing, AI doesn’t stop at basic script creation. It analyzes browser recordings to automatically add think times, label interactions, and parameterize user data for diverse scenarios. Additionally, it detects dynamic values in web traffic and suggests correlations to prevent script failures during high-scale testing.
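To make the correlation and parameterization steps concrete, here is a minimal sketch in plain Python. It extracts a dynamic value from one response, substitutes it into the next recorded request along with per-user data, and draws a randomized think time. The helper names, the `csrf_token` field, and the request template are hypothetical and are not taken from any specific tool.

```python
import random
import re
from string import Template


def extract_dynamic_value(response_body: str, field: str) -> str:
    """Pull a dynamic value (for example a session token) out of a response."""
    pattern = rf'name="{re.escape(field)}"\s+value="([^"]+)"'
    match = re.search(pattern, response_body)
    if match is None:
        raise ValueError(f"{field!r} not found in response")
    return match.group(1)


def build_request(template: str, **params: str) -> str:
    """Fill a recorded request template with per-user and correlated values."""
    return Template(template).substitute(**params)


def think_time(mean_seconds: float, rng: random.Random) -> float:
    """Randomized pause between user actions, never shorter than 0.1 s."""
    return max(0.1, rng.gauss(mean_seconds, mean_seconds * 0.25))


# Hypothetical recorded traffic: the token changes on every page load,
# so replaying the recorded value verbatim would fail under load.
login_page = '<input type="hidden" name="csrf_token" value="a1b2c3">'
recorded = "POST /login user=$user&csrf_token=$csrf_token"

token = extract_dynamic_value(login_page, "csrf_token")
request = build_request(recorded, user="user_0042", csrf_token=token)
print(request)  # POST /login user=user_0042&csrf_token=a1b2c3
```

Without the correlation step, every virtual user would replay the token captured during recording, which is the kind of failure that typically only shows up once a test runs at scale.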

"Creating reliable load tests shouldn't require hours of manual scripting. With AI-assisted load test authoring... you can go from a simple browser recording to a production-ready JMeter script in minutes".

– Nikita Nallamothu, Microsoft

To enhance script reliability, AI relies on the browser’s accessibility tree - using roles, labels, and ARIA attributes - rather than fragile CSS or XPath selectors. This approach focuses on user intent rather than the application’s UI structure, making the scripts more robust and less prone to breaking.

Once created, these scripts adapt seamlessly to changes in the application, ensuring they remain effective over time.

Updating Test Scripts Automatically

AI takes script maintenance to the next level by automatically adapting to application changes. In traditional testing, minor updates - like renaming a button, repositioning a panel, or altering a workflow - can cause scripts to fail. AI healing agents monitor these failures, identify the root causes, and automatically update selectors and interaction paths, minimizing the need for manual intervention.

As of April 2026, TestRail’s AI Test Script Generation is available for free to all Cloud customers during its open beta period. Already, over 50,000 QA professionals worldwide are using AI-driven tools to streamline their testing workflows. This shift allows teams to move away from manual scripting and embrace low-cost, reliable test execution in CI/CD pipelines.

Using AI to Predict Performance Bottlenecks

AI is transforming scalability testing by shifting the focus from reacting to problems after they occur to addressing them before they arise. Instead of waiting for systems to crash under heavy loads, AI leverages historical performance data to predict when and where bottlenecks are likely to happen. This forward-thinking approach can cut capacity-related incidents by 40-70% within the first six months of implementation.

By analyzing patterns in CPU usage, memory, disk I/O, and network traffic, forecasting models - such as LSTM networks and Prophet, the open-source library from Facebook (now Meta) - identify trends that might escape human attention. These include seasonal spikes, time-of-day fluctuations, and marketing-driven surges. Using this information, AI projects future demand and estimates when system resources might hit their limits.
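The core idea of estimating when a resource will hit its limit can be shown with a much simpler model than an LSTM or Prophet. The sketch below swaps in an ordinary least-squares trend line over daily peak values. It ignores seasonality and time-of-day effects, and the function names and sample numbers are illustrative assumptions.

```python
from typing import Optional, Sequence, Tuple


def fit_line(values: Sequence[float]) -> Tuple[float, float]:
    """Least-squares fit of values against their index (0, 1, 2, ...)."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two observations")
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    sxx = sum((x - mean_x) ** 2 for x in range(n))
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(values))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def days_until_limit(daily_peaks: Sequence[float], limit: float) -> Optional[float]:
    """Days after the last observation until the trend crosses `limit`.

    Returns None when usage is flat or falling, and 0.0 when the trend
    is already at or above the limit.
    """
    slope, intercept = fit_line(daily_peaks)
    if slope <= 0:
        return None
    crossing_day = (limit - intercept) / slope
    return max(0.0, crossing_day - (len(daily_peaks) - 1))


# Hypothetical daily peak CPU percentages for one service.
cpu_peaks = [50, 52, 54, 56, 58]
print(days_until_limit(cpu_peaks, limit=80))  # 11.0
```

A production forecaster would add seasonal components and retrain regularly, but the output has the same shape: an estimate of how much headroom remains before a limit is reached.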

Learning from Past Performance Data

AI dives into production telemetry to understand how users interact with systems in the real world. It examines factors like click paths, session durations, geographic usage patterns, and peak activity times. This data helps train models that simulate workloads reflective of actual system stress. Additionally, AI correlates infrastructure metrics with application logs, identifying slow-developing issues such as memory leaks while predicting when these problems might lead to outages.

"AI capacity planning changes the equation by shifting from reactive monitoring to predictive resource management. Instead of waiting for problems to surface, you're modelling future demand and provisioning ahead of it." - Exponential Tech

Traditional systems rely on static thresholds, like triggering an alert when CPU usage exceeds 80%. In contrast, AI-powered tools can provision additional resources hours ahead of predicted peaks, factoring in confidence intervals to ensure systems remain stable and prepared. This proactive approach helps pinpoint bottlenecks before they disrupt performance.
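The difference between a static threshold and provisioning against a forecast with a confidence interval can be sketched as follows. This example assumes a very simple forecast (the mean of past loads at the same hour) and a normal approximation for the upper bound; real forecasting models supply their own intervals. All names and numbers are hypothetical.

```python
import math
from statistics import mean, stdev
from typing import Sequence, Tuple


def static_alert(current_cpu: float, threshold: float = 80.0) -> bool:
    """Traditional rule: fires only once usage is already above the threshold."""
    return current_cpu > threshold


def predicted_peak(same_hour_history: Sequence[float], z: float = 1.96) -> Tuple[float, float]:
    """Return (expected, upper) load for the coming hour.

    The upper bound is mean + z * standard deviation, an approximate
    95% bound when z = 1.96 and the loads are roughly normal.
    """
    expected = mean(same_hour_history)
    upper = expected + z * stdev(same_hour_history)
    return expected, upper


def instances_needed(load_rps: float, rps_per_instance: float) -> int:
    """Instances required to serve `load_rps`, with a minimum of one."""
    return max(1, math.ceil(load_rps / rps_per_instance))


# Hypothetical requests per second at 18:00 on the last three days.
history = [900, 1000, 1100]
expected, upper = predicted_peak(history)

print(instances_needed(expected, rps_per_instance=250))  # 4
print(instances_needed(upper, rps_per_instance=250))     # 5
```

Provisioning for the upper bound rather than the expected value is what "factoring in confidence intervals" amounts to here: the extra instance covers the uncertainty in the forecast.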

Finding Bottlenecks Before They Cause Problems

Using insights from historical data, AI can identify bottlenecks before they escalate. For example, Observe.ai implemented the One Load Audit Framework (OLAF) to optimize its machine learning infrastructure. Under the leadership of Aashraya Sachdeva, Director of Engineering, the team integrated OLAF with Amazon SageMaker to detect bottlenecks in inference pipelines. Automating the analysis of latency, CPU, and memory metrics allowed Observe.ai to shrink its performance testing cycle from a week to just a few hours.

This growing reliance on AI in testing is reflected in the global market, which is predicted to expand from $1,010.9 million in 2025 to $3,824.0 million by 2032. Companies adopting AI-driven testing tools report a 34% reduction in test maintenance time and a 25% improvement in defect detection rates. Reinforcement learning-based test agents further reduce performance testing costs by up to 25% compared to traditional methods.

For teams looking to implement predictive bottleneck detection, a good starting point is auditing the past 12 months of capacity incidents. This helps identify which issues were predictable versus unexpected. Focus on the 5-10 environments where capacity challenges have the highest business impact, and ensure historical metrics are accurate and consistent before building models. Regular retraining is crucial, as user behavior and application architecture evolve over time, potentially impacting model accuracy. This method strengthens AI's role in scalability testing, enabling faster and more precise performance management.

Self-Healing Features in AI Testing

When test scripts break during scalability testing, traditional methods often require manual fixes, which slows the process down. AI-powered self-healing automatically identifies and resolves issues such as broken element locators or failed connections, so tests keep running. This matters because 40% of CI pipeline failures are caused by test flakiness, not actual bugs, and 30% of QA engineering time is spent on test maintenance rather than expanding test coverage. Here's how the recovery process works.

At the heart of self-healing lies a process called element fingerprinting. During test creation, AI assigns a unique "fingerprint" to UI elements. This fingerprint is based on multiple attributes, such as visible text, tag names, CSS classes, ARIA labels, DOM position, and context. If a test fails because an element locator changes - like a renamed ID - the self-healing mechanism kicks in. Instead of halting the test, the AI scans the current DOM, calculates similarity scores for potential matches, and picks the best candidate once it meets a confidence threshold. The locator updates in real time, allowing the test to continue seamlessly.
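The fingerprint matching described above can be sketched in a few lines of Python. This is a simplified illustration, not the scoring used by any particular framework: the attribute weights and the 0.8 threshold are assumptions, and DOM position and surrounding context are left out for brevity.

```python
from dataclasses import dataclass
from difflib import SequenceMatcher
from typing import FrozenSet, Optional, Sequence


@dataclass(frozen=True)
class Fingerprint:
    tag: str
    text: str
    aria_label: str
    css_classes: FrozenSet[str]
    element_id: str = ""


# Hypothetical weights; the ID is deliberately unweighted because it is
# the attribute that changed in this scenario.
WEIGHTS = {"tag": 0.2, "text": 0.35, "aria_label": 0.25, "css_classes": 0.2}


def _text_similarity(a: str, b: str) -> float:
    if not a and not b:
        return 1.0
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def _set_similarity(a: FrozenSet[str], b: FrozenSet[str]) -> float:
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)  # Jaccard index


def similarity(stored: Fingerprint, candidate: Fingerprint) -> float:
    """Weighted similarity in [0, 1] between a stored and a live element."""
    return (
        WEIGHTS["tag"] * (1.0 if stored.tag == candidate.tag else 0.0)
        + WEIGHTS["text"] * _text_similarity(stored.text, candidate.text)
        + WEIGHTS["aria_label"] * _text_similarity(stored.aria_label, candidate.aria_label)
        + WEIGHTS["css_classes"] * _set_similarity(stored.css_classes, candidate.css_classes)
    )


def heal(stored: Fingerprint, candidates: Sequence[Fingerprint],
         threshold: float = 0.8) -> Optional[Fingerprint]:
    """Return the best match if it clears the threshold, otherwise None."""
    best = max(candidates, key=lambda c: similarity(stored, c), default=None)
    if best is None or similarity(stored, best) < threshold:
        return None
    return best


stored = Fingerprint("button", "Submit", "Submit form",
                     frozenset({"btn", "btn-primary"}), "submit-btn")
current_dom = [
    Fingerprint("a", "Cancel", "Cancel", frozenset({"btn"}), "cancel-link"),
    Fingerprint("button", "Submit", "Submit form",
                frozenset({"btn", "btn-primary"}), "send-btn"),  # ID was renamed
]

match = heal(stored, current_dom)
print(match.element_id if match else "no confident match")  # send-btn
```

Returning `None` below the threshold is the important design choice: when no candidate is similar enough, the test should fail loudly rather than click the closest-looking element.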

This approach is more advanced than simple auto-retry, which just re-executes failing actions. Self-healing actively adapts to changes by finding new ways to identify elements when the original locator no longer works. Teams using self-healing frameworks have reported 60-80% reductions in maintenance time. It also tackles a major challenge: 73% of engineering teams cite flaky tests as a significant drain on productivity.

"A test that passes for the wrong reason is more dangerous than a test that fails loudly. Silent wrong heals erode the fundamental trust in your test suite." - Mahima Sharma

To maintain trust in these systems, self-healing must be treated as an auditable process, not an invisible fix. Most frameworks log every "heal" event, detailing what changed - whether a button was moved or a class name was updated - so teams can review these adjustments later. Combining self-healing with auto-retry creates a powerful combo: self-healing addresses broken locators, while auto-retry tackles intermittent issues, covering different aspects of test instability. Additionally, focusing on behavior-based tests (e.g., "Submit the registration form" instead of "Click ID=123") gives AI more flexibility to locate elements, enhancing the effectiveness of self-healing. This capability strengthens AI's role in ensuring reliable and scalable testing processes.
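As a rough sketch of how auto-retry, self-healing, and an audit log fit together, the runner below retries transient errors, heals a broken locator at most once per step, and records every heal for later review. The class names, the `find_replacement` hook, and the locator strings are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional, Tuple


class LocatorNotFound(Exception):
    """The element could not be located (a candidate for self-healing)."""


class TransientError(Exception):
    """An intermittent failure such as a timeout (a candidate for retry)."""


@dataclass
class HealEvent:
    step: str
    old_locator: str
    new_locator: str
    confidence: float


@dataclass
class StepRunner:
    # Hypothetical hook: returns (new_locator, confidence) or None.
    find_replacement: Callable[[str], Optional[Tuple[str, float]]]
    max_retries: int = 2
    heal_log: List[HealEvent] = field(default_factory=list)

    def run(self, step: str, locator: str, action: Callable[[str], None]) -> str:
        """Run action(locator) and return the locator that finally worked."""
        retries = 0
        healed = False
        while True:
            try:
                action(locator)
                return locator
            except TransientError:
                retries += 1  # a real runner would back off before retrying
                if retries > self.max_retries:
                    raise
            except LocatorNotFound:
                # Heal at most once per step so a bad heal cannot loop forever.
                replacement = None if healed else self.find_replacement(locator)
                if replacement is None:
                    raise
                new_locator, confidence = replacement
                self.heal_log.append(HealEvent(step, locator, new_locator, confidence))
                locator, healed = new_locator, True


calls = {"count": 0}


def flaky_click(locator: str) -> None:
    """Simulated action: one timeout, then a stale locator, then success."""
    calls["count"] += 1
    if calls["count"] == 1:
        raise TransientError("timeout")
    if locator == "#submit-btn":
        raise LocatorNotFound(locator)


runner = StepRunner(find_replacement=lambda old: ("role=button[name='Submit']", 0.93))
used = runner.run("Submit the registration form", "#submit-btn", flaky_click)

print(used)                  # role=button[name='Submit']
print(len(runner.heal_log))  # 1
```

Because each `HealEvent` stores the old locator, the new one, and the confidence score, a reviewer can later confirm that the heal matched the intended element.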

Main Benefits of AI in Scalability Testing

AI-powered scalability testing changes how quality assurance is done. By automating processes and adding self-healing capabilities, AI enables organizations to release 30% faster while cutting manual effort and test maintenance. The gains go beyond speed: defect detection rates rise by 25% to 40% with AI-enhanced methods, and companies that automate at least half of their testing cycles see 25% fewer bugs reported after launch.

Another major advantage is cost efficiency. AI-driven automation drastically reduces the time spent on test maintenance, allowing QA teams to focus on expanding test coverage. Debugging also becomes far more efficient - AI can pinpoint and resolve root causes in just minutes. Over time, these efficiencies compound: teams save 30% on developing new test cases and reduce the time needed to migrate automation frameworks by 70%.

AI also stands out for its cross-platform capabilities. Unlike traditional tools that rely on static DOM structures and often fail when UI elements change, AI uses computer vision to identify elements by their visual and semantic characteristics. This means a single test framework can seamlessly operate across desktop, web, and mobile platforms. Enterprise AI tools take it a step further by running parallel tests across 1,500+ browser and operating system configurations simultaneously. What once took weeks of regression testing can now be completed in just hours. This adaptability ensures reliable performance even under varying load conditions.

"Artificial intelligence, deep learning, machine learning - whatever you're doing if you don't understand it - learn it. Because otherwise, you're going to be a dinosaur within three years."

– Mark Cuban, American Businessman

To better understand the advantages, here's a comparison of standard testing methods versus AI-powered approaches:

Standard vs. AI-Powered Testing Methods

| Feature | Standard Testing | AI-Powered Testing |
|---|---|---|
| Speed | Manual scripting; lengthy regression cycles | Autonomous generation; 30% faster release times |
| Accuracy | Brittle locators; prone to human error | Self-healing scripts; 25–40% better defect detection |
| Cost | High maintenance overhead; scales with headcount | 70% less manual effort; reduced maintenance |
| Flexibility | Platform-specific; requires custom frameworks | Cross-platform reuse via Vision AI |
| Diagnostics | Root-cause analysis takes days | AI-driven insights in minutes |
| Maintenance | Manual updates for every UI change | Automated updates handle nearly all UI changes |

These capabilities make AI-powered testing a powerful tool for scalability testing, ensuring systems are not only efficient but also resilient under varying conditions.

Case Studies and Research Findings

Real-world examples highlight how AI is making a measurable difference in scalability testing. Across industries, businesses are seeing time savings, cost reductions, and improved reliability by using AI-driven testing frameworks.

Moving AI Models from Testing to Production

Salesforce faced a scalability problem of its own: it ran 6 million tests a day, which produced about 150,000 failures every month. In October 2025, Senior Engineer Manoj Vaitla and his team introduced the "Test Failure Triage Agent" to handle this volume. Using FAISS-based semantic search and large language model (LLM) reasoning, the team reports a 30% reduction in test failure resolution time, with the average resolution time falling from seven days to two or three. Work that once took months was accomplished in 4–6 weeks with AI-powered tools.
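The retrieval half of this kind of triage can be illustrated with a small similarity search over past failures. To keep the example runnable on the standard library, it replaces FAISS and learned embeddings with bag-of-words vectors and cosine similarity, and it omits the LLM reasoning step entirely. The failure messages and resolutions are invented for illustration and do not come from Salesforce.

```python
import math
import re
from collections import Counter
from typing import List, Tuple


def embed(text: str) -> Counter:
    """Stand-in for an embedding model: lowercase token counts."""
    return Counter(re.findall(r"[a-z0-9_]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[token] * b[token] for token in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def nearest_failures(new_failure: str, history: List[Tuple[str, str]],
                     k: int = 2) -> List[Tuple[float, str, str]]:
    """Rank past (message, resolution) pairs by similarity to a new failure."""
    query = embed(new_failure)
    scored = [(cosine(query, embed(message)), message, fix) for message, fix in history]
    return sorted(scored, key=lambda item: item[0], reverse=True)[:k]


# Hypothetical triage history.
history = [
    ("TimeoutError connecting to database pool in OrderServiceTest",
     "Increase pool size in test config"),
    ("AssertionError expected 200 got 500 in CheckoutApiTest",
     "Roll back the checkout schema change"),
    ("NullPointerException in UserProfileTest setup",
     "Initialize the profile fixture"),
]

new_failure = "TimeoutError connecting to database pool in InventoryServiceTest"
score, message, fix = nearest_failures(new_failure, history, k=1)[0]
print(fix)  # Increase pool size in test config
```

In a system like the one described, the retrieved neighbors would then be passed to an LLM as context so it can draft a recommendation for the developer.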

"The mission of our Platform Quality Engineering team is to serve as the last line of defense... The TF Triage Agent provides developers with concrete recommendations within seconds."

– Manoj Vaitla, Senior Engineer, Salesforce

Airbnb faced a different issue: migrating nearly 3,500 test files from Enzyme to React Testing Library. Engineer Charles Covey-Brandt led a team that used a pipeline of LLMs with retry loops and dynamic prompting. In just four hours, they migrated 75% of the files, and they reached 97% completion within four days. The entire migration was completed in six weeks, against an original estimate of 1.5 years of manual engineering time.
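A retry loop with dynamic prompting can be sketched as follows: each failed attempt feeds its validation errors back into the next prompt. This is a generic illustration under stated assumptions, not Airbnb's pipeline. The `llm` and `validate` callables are hypothetical stand-ins, and the fake versions below exist only to make the example runnable.

```python
from typing import Callable, List, Optional, Tuple


def migrate_file(source: str,
                 llm: Callable[[str], str],
                 validate: Callable[[str], List[str]],
                 max_attempts: int = 3) -> Tuple[Optional[str], int]:
    """Ask the model for a migrated file, feeding validation errors back in.

    Returns (migrated_code, attempts_used), or (None, max_attempts) if no
    attempt passed validation.
    """
    base_prompt = f"Migrate this test from Enzyme to React Testing Library:\n{source}"
    prompt = base_prompt
    for attempt in range(1, max_attempts + 1):
        candidate = llm(prompt)
        errors = validate(candidate)
        if not errors:
            return candidate, attempt
        # Dynamic prompting: include the failed output and its errors.
        prompt = (
            f"{base_prompt}\n\nThe previous attempt was:\n{candidate}\n\n"
            "It failed validation with:\n" + "\n".join(errors)
        )
    return None, max_attempts


def fake_llm(prompt: str) -> str:
    """Stand-in for a model call: only fixes the file after seeing the error."""
    if "shallow is not defined" in prompt:
        return "render(<Button />)"
    return "shallow(<Button />)"


def fake_validate(code: str) -> List[str]:
    """Stand-in for running lint, type checks, and the test itself."""
    return ["shallow is not defined"] if "shallow" in code else []


result = migrate_file("shallow(<Button />)", fake_llm, fake_validate)
print(result)  # ('render(<Button />)', 2)
```

Files that still fail after `max_attempts` return `None`, which leaves a clear list of cases for a human to finish by hand.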

These examples illustrate how AI is transforming complex workflows, paving the way for its application in intricate areas like microservices testing.

AI in Microservices Testing

AI's potential shines even brighter in microservices testing. Uber Technologies showcased how AI can handle the intricate interdependencies in such environments. A single trip request in Uber's system interacts with 500 services and involves over 30,000 RPC calls. Starting in Q1 2024, Uber combined "DragonCrawl" with "uHavoc" to test mobile resilience across its Rider, Driver, and Eats apps. This setup executed over 180,000 automated chaos tests, saving around 39,000 hours of manual effort. It also uncovered 23 resilience risks, including 12 severe issues that could have disrupted key operations. Additionally, AI-powered root cause analysis achieved 88% precision, cutting debugging time from hours to just minutes.

Meanwhile, a leading US-based CRM platform partnered with SourceFuse to migrate over 2,000 regression scenarios to a multi-tenant AWS architecture. By using GitHub Copilot along with a proprietary "AI Healer", the project achieved zero defect leakage into production, reduced QA cycles by 40%, and saved 30–40% in test design and execution time.

Conclusion

AI is reshaping scalability testing by moving beyond manual scripts and reactive troubleshooting. With features like autonomous test generation, self-healing mechanisms, and smarter prioritization, tasks that once took days - like root-cause analysis - can now be completed in minutes. This allows developers to tighten feedback loops and deliver more dependable software faster.

The numbers speak for themselves: organizations leveraging AI-driven testing report a 34% reduction in test maintenance time, a 70% cut in manual testing efforts, and 25%–40% higher defect detection rates, enabling product releases that are up to 30% faster.

"AI isn't just the future of QA. It's the present. From autonomous test generation to adaptive test healing, AI offers unparalleled efficiency gains, scalability, and accuracy." – Javier Bastante, Functionize

For small and medium-sized enterprises (SMEs) and growing businesses, AI levels the playing field: large QA teams and hefty budgets are no longer a prerequisite. Take, for example, a 2019 case study by Kim et al., in which a manufacturing SME implemented an AI-driven machine monitoring system using open-source tools and affordable hardware, all for under $500. Although that project involved machine monitoring rather than software testing, it suggests that AI-powered tooling is not reserved for industry giants and can be accessible and impactful for businesses of all sizes.

AI is turning testing from a bottleneck into a strategic advantage. By proactively identifying performance issues, adapting to changes in real time, and delivering actionable insights, AI-powered scalability testing lets businesses grow with confidence. It goes beyond automation by adding intelligence to how modern software is developed and tested.

FAQs

What data is required for AI-based scalability predictions?

AI-driven predictions for scalability rely heavily on data from key workload metrics. These include latency, throughput, error rates, resource usage, and overall system performance under increasing loads. By analyzing these metrics, AI models can evaluate system behavior and estimate how effectively it can handle shifts in demand.

How do self-healing tests avoid hiding real bugs?

Self-healing tests use AI to adjust to changes in a user interface by interpreting several signals, such as visual appearance, context, position, ARIA roles, and text content. A heal is applied only when the best candidate meets a confidence threshold, and most frameworks log every heal event so teams can review what changed. Together, these safeguards help distinguish a true bug from a routine locator update and keep a wrong match from passing silently.

How can a small team add AI to scalability testing in CI/CD?

Small teams can effectively incorporate AI into scalability testing by leveraging AI-driven test selection and infrastructure management. Here's how it works:

- Smarter Test Selection: AI can analyze code changes and predict which tests are most relevant, eliminating the need to run the entire test suite. This not only saves time but also accelerates continuous integration (CI) workflows.

- Optimized Resource Management: AI tools can dynamically allocate resources, enable auto-scaling, and reduce infrastructure costs. This ensures testing remains efficient and reliable, even during periods of heavy workload.

By using these AI capabilities, teams can streamline their testing processes without compromising on quality.
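For a sense of what test selection looks like in a CI job, here is a rule-based baseline that picks tests whose covered files overlap with the changed files. An AI-driven selector would replace the static coverage map with a learned model of which tests tend to fail for which changes. The file paths and test names are hypothetical.

```python
from typing import Dict, Iterable, List, Set


def select_tests(changed_files: Iterable[str],
                 coverage_map: Dict[str, Set[str]],
                 always_run: Iterable[str] = ()) -> List[str]:
    """Return the tests that touch any changed file, plus mandatory tests."""
    changed = set(changed_files)
    selected = {test for test, files in coverage_map.items() if files & changed}
    selected.update(always_run)
    return sorted(selected)


# Hypothetical mapping from load tests to the source files they exercise.
coverage_map = {
    "load_checkout": {"checkout/api.py", "cart/models.py"},
    "load_search": {"search/index.py"},
    "smoke_login": {"auth/session.py"},
}

tests = select_tests(["cart/models.py"], coverage_map, always_run=["smoke_login"])
print(tests)  # ['load_checkout', 'smoke_login']
```

Keeping a small `always_run` set is a cheap safeguard against gaps in the coverage map, whichever selection method is used.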