When you use AI tools like ChatGPT, your sensitive data - trade secrets, proprietary code, and more - could be at risk. Many standard NDAs don’t stop vendors from using your information to train their models, which can create legal and competitive risks. By 2025, 34.8% of employee inputs into ChatGPT included sensitive data, up from 11% in 2023, highlighting the growing threat.

Here’s how to protect your business:

- Prohibit AI training: Add clauses to your contracts that explicitly ban vendors from using your data for model training.

- Data retention rules: Require vendors to delete all data, including derived assets like embeddings, when the contract ends.

- Technical safeguards: Insist on encryption, data isolation, and no-human-review clauses to secure your inputs.

- Vendor transparency: Demand notifications for model updates, data breaches, and new sub-processors.

These steps can help you avoid costly mistakes, like one healthcare firm’s $3 million contract loss due to improper AI data use. Contracts tailored for AI risks, like AI Addendums, are your best defense. Regular NDAs won’t cut it.

1. AI Confidentiality Clauses in Standard NDAs

Data Retention Restrictions

Most traditional NDAs fall short when it comes to addressing data uploaded to AI systems. To ensure your proprietary information is protected, your NDA should explicitly require the deletion of all input data, prompts, and any derived assets - such as embeddings or logs - when the agreement ends. Additionally, it should mandate that customer prompts be kept separate from all other data. Without clear rules on this, fragments of your sensitive information could linger in the vendor's systems.

Data isolation is another critical factor. If a vendor cannot guarantee that your data will remain completely separate, there’s a real risk that your competitive insights could unintentionally influence another client’s AI-generated outputs. These safeguards pave the way for more detailed restrictions on how your data is handled in AI training.

Prohibitions on AI Training

A key gap in many NDAs is the lack of specific language about AI model training. If your agreement doesn’t explicitly prevent the vendor from using your data for training purposes, you should assume that they might. To close this loophole, your NDA must clearly forbid vendors from using your data to enhance their models.

"If a vendor retains the right to mine your prompts for training, they are using your content." - Jana Gouchev, Founder, Gouchev Law PLLC

Include clauses that prohibit training and commingling of data and that require immediate deletion of all inputs. These provisions should ensure that your prompts, data structures, or product plans cannot be used to train general-purpose or commercial AI models. It’s also essential to define user prompts as confidential information and state that any derivative data - like embeddings, fine-tuning outputs, or logs - remains your property. Extend these restrictions to subcontractors and sub-processors, as these third parties might otherwise use tools with fewer safeguards. Tightening these clauses helps eliminate risks tied to AI data misuse.

Technical Safeguards

Contractual commitments won’t mean much without enforceable technical measures. Your NDA should require robust protections like encryption (both in transit and at rest), data isolation, and logical separation of your environment from other customers. Additionally, demand documented evidence of these safeguards.

"Make sure input is encrypted and isolated and will be deleted on termination." - Jana Gouchev, Founder, Gouchev Law PLLC

Since standard Master Service Agreements rarely address AI-specific risks, many companies are now adopting standalone AI Addendums. These documents focus on essential areas like technical safeguards, training rights, and model updates. They should also include permanent deletion protocols and align with relevant regulations, such as the Colorado Artificial Intelligence Act or HIPAA for industries handling sensitive data.

Vendor Transparency

Technical controls and contractual restrictions are only part of the solution - vendor accountability is equally important. Your NDA should require vendors to notify you of any significant updates to their models or training data, especially if these changes could impact security or performance. This level of transparency helps you stay ahead of potential risks tied to evolving AI systems.

Finally, review your existing NDAs for any mention of terms like "machine learning", "automated systems", or "AI." If these terms are missing, it’s a strong indicator that your agreement needs to be updated.
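As a first pass before legal review, the keyword check described above can be automated. The following is a minimal sketch, assuming the NDA is already available as plain text; the function names `find_ai_terms` and `needs_ai_update` are hypothetical, and a keyword match says nothing about whether the surrounding clause actually protects you.

```python
import re

# Terms the article suggests looking for; extend the list as needed.
AI_TERMS = ["machine learning", "automated systems", "AI"]


def find_ai_terms(nda_text: str) -> dict[str, bool]:
    """Report which AI-related terms appear in the NDA text."""
    found = {}
    for term in AI_TERMS:
        # "AI" is matched case-sensitively as a whole word so that words
        # such as "maintain" do not count; the other terms ignore case.
        flags = 0 if term == "AI" else re.IGNORECASE
        pattern = r"\b" + re.escape(term) + r"\b"
        found[term] = re.search(pattern, nda_text, flags) is not None
    return found


def needs_ai_update(nda_text: str) -> bool:
    """True when none of the terms appear, suggesting the NDA needs review."""
    return not any(find_ai_terms(nda_text).values())
```

An agreement that passes this check still needs a human reading; one that fails it almost certainly predates your AI use and belongs at the top of the review queue.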

2. Advanced AI Clauses for Business Contracts

Expanding on standard NDA measures, advanced AI clauses in business contracts set stricter timelines, detailed protocols, and stronger protections - essential for high-risk industries.

Data Retention Restrictions

Define exact retention periods for all types of data, including prompts, completions, conversation logs, metadata, system logs, and derived data like embeddings, vector representations, and fine-tuned model weights. Avoid ambiguous terms like "up to 30 days", as they leave room for misinterpretation. For sensitive fields like healthcare, legal, or financial services, aim for Zero Data Retention (ZDR), ensuring data is deleted immediately after processing.

Keep in mind that derived data can outlive the deletion of raw data, potentially retaining proprietary information within the vendor's systems. To safeguard this, demand the deletion of all derived data upon contract termination, with written confirmation as proof. These specific rules on retention set the stage for more effective training limitations.
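One way to apply these retention rules during contract review is to record the retention wording for each data category and flag gaps. The sketch below is illustrative only: the `RetentionTerm` class and `review_retention` function are hypothetical, and apart from "up to", which comes from the example above, the list of ambiguous phrases is an assumption you should adapt.

```python
from dataclasses import dataclass

# Data categories named in this section: raw inputs plus derived assets.
REQUIRED_CATEGORIES = [
    "prompts", "completions", "conversation logs", "metadata",
    "system logs", "embeddings", "vector representations",
    "fine-tuned model weights",
]

# Phrases treated as ambiguous. Only "up to" comes from the article;
# the others are assumed examples.
AMBIGUOUS_PHRASES = ("up to", "approximately", "reasonable", "as needed")


@dataclass
class RetentionTerm:
    category: str
    wording: str  # retention language as written in the contract


def review_retention(terms: list[RetentionTerm]) -> list[str]:
    """Return a list of issues: uncovered categories and vague wording."""
    issues = []
    covered = {t.category for t in terms}
    for category in REQUIRED_CATEGORIES:
        if category not in covered:
            issues.append(f"missing retention period for {category}")
    for t in terms:
        lowered = t.wording.lower()
        if any(phrase in lowered for phrase in AMBIGUOUS_PHRASES):
            issues.append(f"ambiguous wording for {t.category}: {t.wording!r}")
    return issues
```

An empty result means every category has a retention term with no flagged phrasing; it does not confirm that the period itself is acceptable.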

"A trust page can be updated at any time, without notice, with no obligation to you. Your signed enterprise agreement is the only document that creates enforceable obligations." - Redress Compliance

Prohibitions on AI Training

Include explicit terms that forbid the use of your inputs, outputs, or derived data for training, fine-tuning, or algorithm optimization without your written consent. Tighten the definition of "feedback" to exclude proprietary content, trade secrets, and user corrections to AI outputs. Extend these restrictions to all sub-processors, as third parties may operate with less stringent safeguards.

Technical Safeguards

Require robust technical measures to protect your data. This includes encryption for data at rest and in transit, adhering to ISO/IEC 27001 or NIST CSF standards, private network access via Virtual Networks (VNet) and private endpoints, and role-based access controls. For highly sensitive tasks, secure a "no-human-review" clause to ensure vendor employees cannot view your prompts or completions.

Additionally, request annual third-party SOC 2 Type II audits, thorough documentation of data isolation and incident response protocols, and API-level logging with a minimum of 12 months' retention. These technical safeguards work hand-in-hand with transparent vendor practices to protect your data.
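The safeguards listed in this section can be tracked per vendor as a simple record. This is a rough sketch, not a compliance tool: the `VendorSafeguards` fields and the `safeguard_gaps` function are hypothetical names, and the values would come from the vendor's documentation and audit reports.

```python
from dataclasses import dataclass

MIN_LOG_RETENTION_MONTHS = 12  # minimum API log retention named above


@dataclass
class VendorSafeguards:
    encrypts_at_rest: bool
    encrypts_in_transit: bool
    private_network_access: bool
    role_based_access: bool
    no_human_review: bool
    soc2_type2_annual: bool
    api_log_retention_months: int


def safeguard_gaps(v: VendorSafeguards) -> list[str]:
    """List the safeguards the vendor has not committed to."""
    # Boolean fields that are False are gaps; the integer field is
    # checked separately against the 12-month minimum.
    gaps = [name for name, value in vars(v).items() if value is False]
    if v.api_log_retention_months < MIN_LOG_RETENTION_MONTHS:
        gaps.append("api_log_retention_months")
    return gaps
```

Each name returned is a point to raise in negotiation or to cover in the AI Addendum.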

Vendor Transparency

Transparency is critical. Insist that vendors maintain an updated list of sub-processors, provide 30–60 days' written notice before engaging new ones, and give 60–90 days' notice before changing their processing practices. Include a right to terminate the agreement if protections are weakened.

For production environments, require at least 60 days' notice before the vendor changes a model version, giving you time for internal testing and validation. Also, demand breach notifications within 48–72 hours of discovery. These transparency measures, combined with prompt notifications, ensure vendors remain accountable and compliant with your specified safeguards.
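Notice windows like these are easy to check with date arithmetic once they are written into the contract. The following is a minimal sketch using the 60-day model-change minimum and the upper end of the 48–72 hour breach window from this section; the function names are hypothetical, and your contract may count business days or define "discovery" differently.

```python
from datetime import date, datetime, timedelta

MODEL_CHANGE_NOTICE_DAYS = 60  # minimum notice before a model version change
BREACH_NOTICE_HOURS = 72       # upper end of the 48-72 hour window


def model_change_notice_ok(notice_date: date, change_date: date) -> bool:
    """True if the vendor gave at least the required calendar days of notice."""
    return change_date - notice_date >= timedelta(days=MODEL_CHANGE_NOTICE_DAYS)


def breach_notice_deadline(discovered_at: datetime) -> datetime:
    """Latest time by which the vendor must notify you of a breach."""
    return discovered_at + timedelta(hours=BREACH_NOTICE_HOURS)
```

For instance, a notice dated January 1, 2026 for a change on March 1, 2026 gives only 59 days and would fail the check, while a change on March 2 would pass.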

Pros and Cons

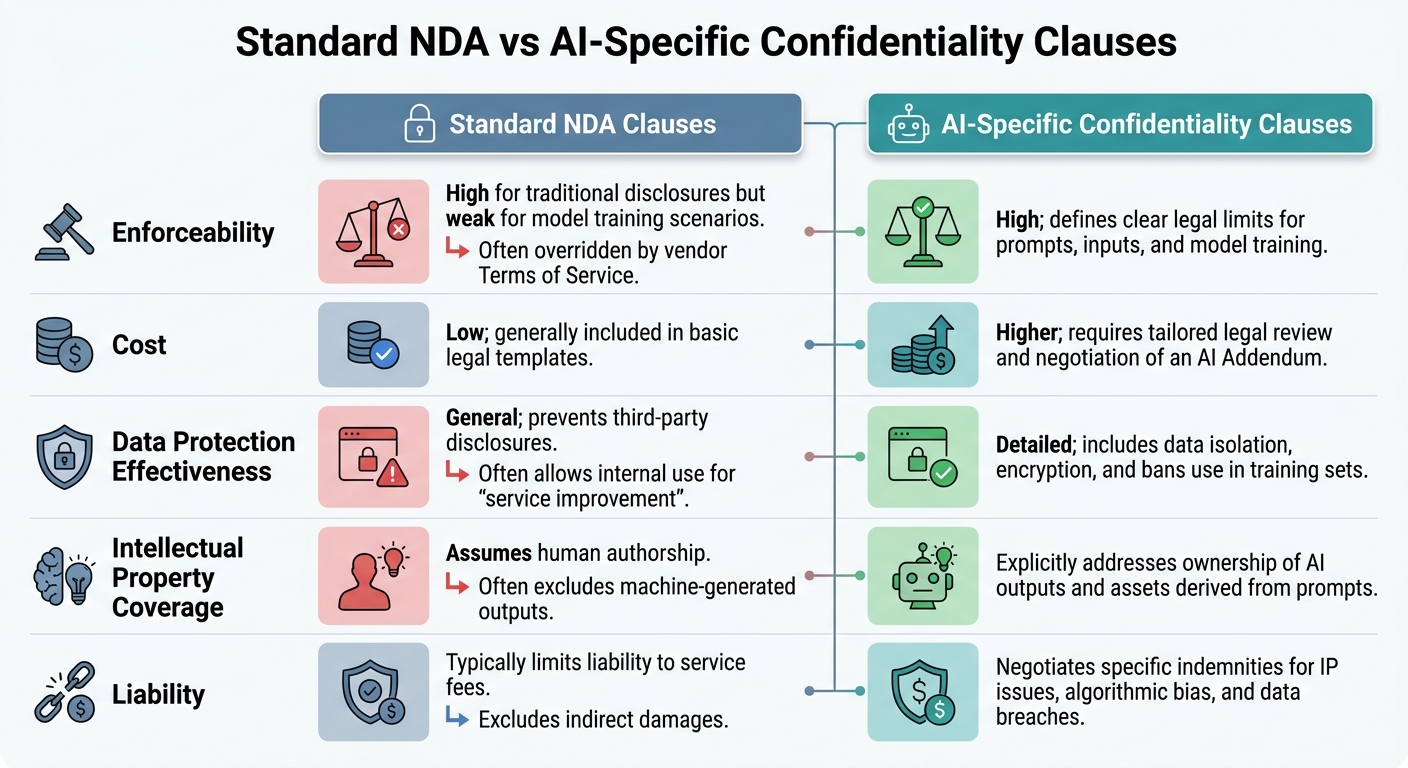

Standard NDA vs AI-Specific Confidentiality Clauses Comparison

Understanding the upsides and downsides of each contractual approach is key, especially given the vulnerabilities discussed earlier. Standard NDAs are inexpensive and easy to access, making them a common choice. However, they often fall short when it comes to addressing AI-specific risks, such as model training rights. This gap can allow vendors to legally use your inputs to refine their systems, which has already led to multimillion-dollar losses and regulatory penalties for some companies.

On the other hand, AI-specific clauses, typically added through an AI Addendum, provide stronger safeguards. However, these come with higher costs for legal review and negotiation. A major sticking point in AI contracts is data usage rights - 44% of companies have reported discovering training data clauses they never explicitly approved.

Here’s a side-by-side look at how standard NDAs and AI-specific confidentiality clauses compare:

| Feature | Standard NDA Clauses | AI-Specific Confidentiality Clauses |
|---|---|---|
| Enforceability | High for traditional disclosures but weak for model training scenarios, often overridden by vendor Terms of Service | High; defines clear legal limits for prompts, inputs, and model training |
| Cost | Low; generally included in basic legal templates | Higher; requires tailored legal review and negotiation of an AI Addendum |
| Data Protection Effectiveness | General; prevents third-party disclosures but often allows internal use for "service improvement" | Detailed; includes data isolation, encryption, and bans use in training sets |
| Intellectual Property Coverage | Assumes human authorship; often excludes machine-generated outputs | Explicitly addresses ownership of AI outputs and assets derived from prompts |
| Liability | Typically limits liability to service fees and excludes indirect damages | Negotiates specific indemnities for IP issues, algorithmic bias, and data breaches |

This comparison underscores the importance of tailoring terms to your business’s size and spending power. Smaller companies often have to accept standard terms with limited room for negotiation. Larger organizations, however, can secure enterprise-level protections, such as zero-retention commitments and comprehensive IP indemnification. Before signing any agreement with an AI vendor, check that the confidentiality obligations you owe your own clients are compatible with the vendor’s license terms.

Conclusion

The move from standard contracts to confidentiality clauses tailored for AI is becoming increasingly critical. Traditional NDAs, originally meant for human-to-human interactions, often fail to address the complexities of data handled by automated AI systems. Without these specific protections, vendors could use your prompts to train their models, incorporate proprietary information, and expose your organization to regulatory risks that go beyond standard liability limits.

"The AI Addendum is where the real protection lives. Your MSA won't cover what matters most." – Jana Gouchev, Founder, Gouchev Law PLLC

Consider the cautionary tale of a healthcare staffing firm in late 2025. The company lost a $3 million annual contract, incurred $380,000 in legal expenses, and was forced to notify over 3,000 candidates - all because their vendor agreement lacked AI-specific compliance clauses. The vendor had improperly used candidate health data to train its AI model, leaving the firm without any contractual protections.

To avoid similar pitfalls, ensure that all future AI vendor agreements include key safeguards: explicit no-training provisions, clear ownership definitions for AI-generated outputs, opt-out options for human review of sensitive data, and strict zero data retention policies. Remember: contracts are the only enforceable protection; vendor trust pages can change without warning.

Taking proactive steps to update contracts now can close existing vulnerabilities. This is a vital part of a broader AI integration checklist for organizations. With 73% of agency agreements still missing AI-specific clauses as of 2026, businesses that act promptly will secure both compliance and a competitive edge.

FAQs

How can I tell if my NDA lets an AI vendor train on my data?

When reviewing your NDA, look for any clause that gives the vendor permission to use your data beyond delivering the service. Training rights are rarely labeled as such; they tend to sit inside sections on data ownership, "feedback", or "service improvement". Also check whether the vendor's Terms of Service override the NDA. If the agreement never mentions AI, machine learning, or model training at all, assume your data may be used for training.

What AI data must be deleted at contract end (including embeddings and logs)?

At the end of a contract, the vendor should erase all of your AI-related data, including inputs, prompts, and derived assets such as embeddings and logs, but that obligation exists only if the contract states it. Without a deletion clause, retention follows the provider's default policy. For example, OpenAI removes deleted conversations and temporary chats within 30 days. Logs are securely stored only for as long as necessary to meet legal requirements and are not kept permanently.

What AI security terms should be non-negotiable in a vendor contract?

When drafting AI security agreements, certain terms are must-haves to protect your interests. These should cover:

- Data rights and ownership: The contract must clearly define who owns the data and outline the rights associated with it. This ensures your business retains control over its proprietary information.

- Restrictions on data use and training: Specify how your data can and cannot be used, particularly when it comes to training AI models. This prevents unauthorized use of your data for purposes beyond the agreed scope.

- Service level agreements (SLAs): Include guarantees for uptime and performance to ensure the AI system meets your operational needs consistently.

- Indemnification clauses: Protect your business from potential liabilities by requiring the provider to take responsibility for any issues arising from AI-generated outputs.

These terms are critical for safeguarding your business while leveraging AI solutions.